Founder & CEO of solCme

Shahar Maoz

Musician. Developer. Founder.

I'm building a digital rehabilitation platform that uses computer vision to turn body movement into sound.

The platform captures movement through a standard webcam, translates it into musical control, and generates clinical rehabilitation data in real time.

Rehabilitation exercises are repetitive and hard to stick with.

Therapists lack objective data between sessions.

solCme solves both problems at once.

Movement as Input

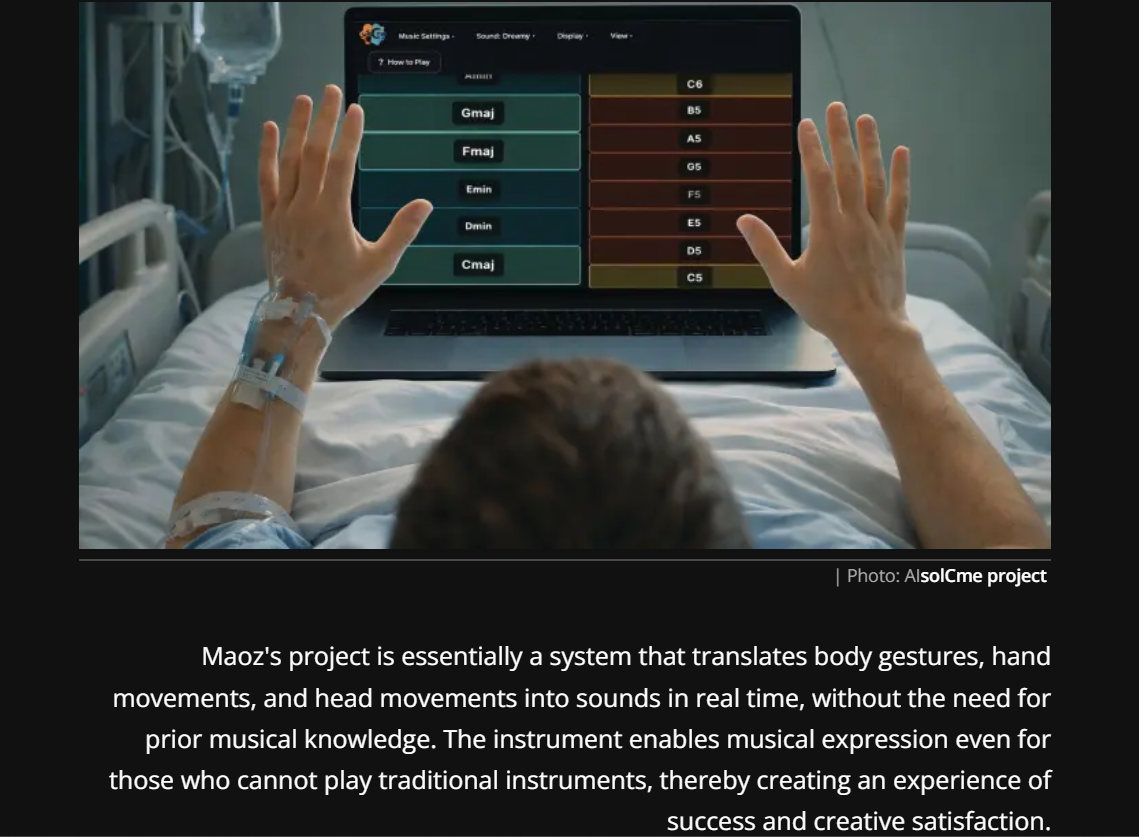

Our instruments adapt to the player, not the other way around. A head tilt, a finger gesture, a facial expression. Any controlled movement can produce sound.

Clinical-Grade Data

Each session automatically captures range of motion, stability, fatigue, and consistency. Therapists get structured reports without extra documentation work.

Patient Motivation

Music gives patients a reason to repeat their exercises. Relevant for stroke recovery, pediatric autism therapy, and cognitive decline.

solCme is a digital rehabilitation platform that translates body movement into musical expression using computer vision. No wearables, no special hardware. Just a webcam.

The system creates a feedback loop: movement produces sound in real time, while the platform logs clinical metrics for the therapist.
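A minimal sketch of that loop, assuming the computer vision step has already produced a single movement angle in degrees per frame. The names `angle_to_midi_note` and `SessionLog`, the angle limits, and the note range are all hypothetical and chosen for illustration; this is not the platform's actual code.

```python
from dataclasses import dataclass, field


def angle_to_midi_note(angle_deg: float, min_deg: float = -45.0,
                       max_deg: float = 45.0, low_note: int = 48,
                       high_note: int = 72) -> int:
    """Map a movement angle linearly onto a MIDI note range (assumed limits)."""
    # Clamp so movement outside the expected range still produces a valid note.
    clamped = max(min_deg, min(max_deg, angle_deg))
    fraction = (clamped - min_deg) / (max_deg - min_deg)
    return low_note + round(fraction * (high_note - low_note))


@dataclass
class SessionLog:
    """Hypothetical per-session record: every frame both sounds and is logged."""
    angles: list[float] = field(default_factory=list)

    def record(self, angle_deg: float) -> int:
        # One call per frame: store the raw angle, return the note to play.
        self.angles.append(angle_deg)
        return angle_to_midi_note(angle_deg)

    def range_of_motion(self) -> float:
        # Range of motion here is simply max minus min of the observed angles.
        if not self.angles:
            return 0.0
        return max(self.angles) - min(self.angles)
```

Each call to `record` feeds both sides of the loop: the returned note goes to the synthesizer, and the stored angle feeds the therapist's report.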

HandSynth

HeadSynth

Data Dashboard

I'm a full-stack developer and classical guitarist from Israel. For over 15 years I performed, taught, and worked with music in therapeutic settings, including with autistic children, motor rehabilitation patients, and elderly populations.

I taught myself Python during a long hospitalization, then completed full-stack training at John Bryce. That period gave me both technical skills and a personal understanding of motor rehabilitation from the patient side.

Now I build systems that connect computer vision, sound synthesis, and clinical measurement. That combination is what solCme is built on.

Used music therapeutically with rehabilitation populations.

Open to clinical partnerships, pilot programs, and conversations about accessible music technology.